Last updated September 10, 2025

Amazon Simple Storage Service (S3) is a durable and available store, ideal for storing application content like media files, static assets, and user uploads.

Storing static files outside your app is crucial on Heroku because dynos have an ephemeral filesystem. Whenever a dyno is replaced or restarted, which happens at least once a day, all files that aren’t part of your application’s slug are lost. Use a storage service like S3 to offload static files from your app.

To add S3 to your app without creating an AWS account, see the Bucketeer add-on.

Overview

All files sent to S3 are stored in a bucket. A bucket acts as a top-level container, much like a directory, and its name must be unique across all of S3. A single bucket typically stores the files, assets, and uploads for an application. An Access Key ID and a Secret Access Key govern access to the S3 API.

Setting Up S3 for Your Heroku App

To use S3, your application needs access to your AWS credentials and to the name of the bucket where it stores files.

Create a dedicated IAM user and use its credentials with your Heroku app. Avoid using your root AWS credentials.
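As an illustration of what a narrowly scoped IAM user could look like, the sketch below builds a policy document that only grants access to a single bucket. The function name `s3_bucket_policy`, the placeholder bucket name, and the choice of actions (list, read, write, delete) are assumptions; adjust them to what your app actually needs.

```python
import json


def s3_bucket_policy(bucket_name):
    """Build an IAM policy document limited to one bucket (hypothetical helper)."""
    bucket_arn = f"arn:aws:s3:::{bucket_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing applies to the bucket itself.
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [bucket_arn],
            },
            {
                # Object-level actions apply to the keys inside the bucket.
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject"],
                "Resource": [f"{bucket_arn}/*"],
            },
        ],
    }


if __name__ == "__main__":
    # "example-app-assets" is a placeholder bucket name.
    print(json.dumps(s3_bucket_policy("example-app-assets"), indent=2))
```

The printed JSON can be attached to the IAM user as an inline policy, so that its keys can't reach other buckets or AWS services.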

Configure Credentials

You can find your S3 credentials in the My Security Credentials section of AWS.

Never commit your S3 credentials to version control. Set them in your config vars instead.

Use the heroku config:set command to set both keys:

$ heroku config:set AWS_ACCESS_KEY_ID=MY-ACCESS-ID AWS_SECRET_ACCESS_KEY=MY-ACCESS-KEY

Adding config vars and restarting app... done, v21

AWS_ACCESS_KEY_ID => MY-ACCESS-ID

AWS_SECRET_ACCESS_KEY => MY-ACCESS-KEY

Name Your Bucket

Create your S3 bucket in the same region as your Heroku app to take advantage of AWS’s free in-region data transfer rates.

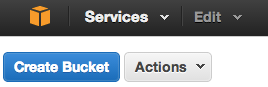

To create a bucket, access the S3 section of the AWS Management Console and create a new bucket in the US Standard region:

Follow AWS’ bucket naming rules to ensure maximum interoperability.
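To catch obviously invalid names before creating a bucket, a quick local check can help. The sketch below covers only a subset of the AWS naming rules (3 to 63 characters, lowercase letters, digits, dots, and hyphens, starting and ending with a letter or digit, no consecutive dots, not shaped like an IP address). The function name is hypothetical, and the AWS documentation remains the authoritative source.

```python
import re

# Lowercase letters, digits, dots, and hyphens; 3-63 characters in total;
# must start and end with a letter or digit.
_BUCKET_NAME = re.compile(r"[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]")
# Names formatted like an IPv4 address (for example 192.168.0.1) are rejected.
_IP_LIKE = re.compile(r"\d{1,3}(\.\d{1,3}){3}")


def looks_like_valid_bucket_name(name):
    """Check a subset of the S3 bucket naming rules (hypothetical helper)."""
    if not _BUCKET_NAME.fullmatch(name):
        return False
    if ".." in name:
        return False
    if _IP_LIKE.fullmatch(name):
        return False
    return True
```

For example, `looks_like_valid_bucket_name("example-app-assets")` passes, while a name with uppercase letters such as `"Example-App"` does not.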

Store the bucket name in a config var to give your application access to its value:

$ heroku config:set S3_BUCKET_NAME=example-app-assets

Adding config vars and restarting app... done, v22

S3_BUCKET_NAME => example-app-assets
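Heroku exposes config vars to your app as environment variables. A minimal sketch of reading the three values set above might look like the following; the `S3Config` class and `load_s3_config` function are hypothetical names introduced for illustration.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class S3Config:
    access_key_id: str
    secret_access_key: str
    bucket_name: str


def load_s3_config(environ=os.environ):
    """Read the S3 settings from environment variables and fail fast if any is missing."""
    required = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY", "S3_BUCKET_NAME")
    missing = [name for name in required if not environ.get(name)]
    if missing:
        raise RuntimeError("Missing config vars: " + ", ".join(missing))
    return S3Config(
        access_key_id=environ["AWS_ACCESS_KEY_ID"],
        secret_access_key=environ["AWS_SECRET_ACCESS_KEY"],
        bucket_name=environ["S3_BUCKET_NAME"],
    )
```

Failing at startup when a variable is missing tends to be easier to debug than an authentication error on the first upload.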

Manually Uploading Static Assets

You can manually add static assets such as videos, PDFs, JavaScript, CSS, and image files using the command line or the Amazon S3 console.
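If you have many files, a small script run from your own machine is an alternative to uploading them one at a time. The sketch below assumes the boto3 library and an S3 client created with `boto3.client("s3")`; the `upload_directory` function and its `prefix` parameter are hypothetical names.

```python
import mimetypes
from pathlib import Path


def upload_directory(s3_client, bucket, local_dir, prefix=""):
    """Upload every file under local_dir to the bucket and return the keys used.

    s3_client is assumed to be a boto3 S3 client, for example boto3.client("s3").
    """
    root = Path(local_dir)
    uploaded = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        # Use forward slashes in keys regardless of the local operating system.
        key = prefix + path.relative_to(root).as_posix()
        # Set Content-Type so browsers treat CSS, images, and so on correctly.
        content_type, _ = mimetypes.guess_type(path.name)
        extra = {"ContentType": content_type} if content_type else None
        s3_client.upload_file(str(path), bucket, key, ExtraArgs=extra)
        uploaded.append(key)
    return uploaded
```

Passing the client in as an argument keeps the function easy to try against a stub before pointing it at a real bucket.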

Uploading Files From a Heroku App

There are two approaches to processing and storing file uploads from a Heroku app to S3: direct and pass-through. See the language guides for specific instructions.

Direct Uploads

In a direct upload, a file uploads to your S3 bucket from a user’s browser, without first passing through your app. Although this method reduces the amount of processing your application needs to perform, it can be more complex to implement. It also limits the ability to modify files before storing them in S3.
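One common way to implement a direct upload is for your app to hand the browser a short-lived, signed upload form instead of the file contents. The sketch below assumes a boto3 S3 client and its `generate_presigned_post` method; the `build_direct_upload` function, the `uploads/` key prefix, and the size and expiry defaults are illustrative assumptions.

```python
import os
import uuid


def build_direct_upload(s3_client, bucket, filename, content_type,
                        max_bytes=10 * 1024 * 1024, expires_in=300):
    """Return the URL and form fields a browser needs to POST a file straight to S3.

    s3_client is assumed to be a boto3 S3 client, for example boto3.client("s3").
    """
    # Prefix the key with a random component so uploads don't overwrite each
    # other, and keep only the base name of the user-supplied filename.
    key = f"uploads/{uuid.uuid4().hex}/{os.path.basename(filename)}"
    return s3_client.generate_presigned_post(
        Bucket=bucket,
        Key=key,
        Fields={"Content-Type": content_type},
        Conditions=[
            {"Content-Type": content_type},
            # Reject empty files and files larger than max_bytes.
            ["content-length-range", 1, max_bytes],
        ],
        ExpiresIn=expires_in,
    )
```

The returned dictionary contains a `url` and a set of `fields`; the browser submits those fields together with the file in a multipart form POST, so the file never passes through a dyno.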

Pass-Through Uploads

In a pass-through upload, a file uploads to your app, which in turn uploads it to S3. This method enables you to perform preprocessing on user uploads before you push them to S3. Depending on your chosen language and framework, this method can cause latency issues for other requests while the upload takes place. Use background workers to process uploads to free up your app.

Use background workers to upload files. Large file uploads in single-threaded, non-evented environments, like Rails, block your application’s web dynos and can cause request timeouts. EventMachine, Node.js, and JVM-based languages are less susceptible to such issues.
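The idea of handing uploads to a background worker can be sketched with an in-process queue. This is only an illustration: it assumes a boto3 S3 client with its `upload_fileobj` method, the function names are hypothetical, and a production Heroku app would more likely use a separate worker process with a shared job queue rather than a thread.

```python
import io
import queue
import threading


def upload_worker(jobs, s3_client, bucket):
    """Drain (key, data) jobs from the queue and push each one to S3."""
    while True:
        job = jobs.get()
        try:
            if job is None:  # Sentinel: stop the worker.
                return
            key, data = job
            s3_client.upload_fileobj(io.BytesIO(data), bucket, key)
        finally:
            jobs.task_done()


def start_upload_worker(s3_client, bucket):
    """Start a daemon thread running upload_worker and return (queue, thread)."""
    jobs = queue.Queue()
    thread = threading.Thread(
        target=upload_worker, args=(jobs, s3_client, bucket), daemon=True
    )
    thread.start()
    return jobs, thread
```

A request handler would call `jobs.put((key, data))` and return immediately, leaving the slow transfer to S3 to the worker.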

Language-Specific Guides

Here are language-specific guides to handling uploads to S3:

| Language/Framework | Tutorials |
|---|---|
| Ruby/Rails | |
| Node.js | |
| Python | |
| PHP | |

Referring to Your Assets

You can use your assets’ public URLs, such as https://s3.amazonaws.com/bucketname/filename, in your application’s code. S3 directly serves these files, freeing up your application to serve only dynamic requests.

For faster page loads, consider using a content delivery service, such as Amazon CloudFront, to serve your static assets instead.
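A small helper can keep asset URLs consistent and make it easy to switch to a CDN later. The sketch below uses the URL format shown above; the `asset_url` function and its `cdn_host` parameter are hypothetical, and the CDN hostname in the usage note is a placeholder.

```python
from urllib.parse import quote


def asset_url(key, bucket, cdn_host=None):
    """Build a public URL for an object, optionally served through a CDN host."""
    # Percent-encode characters such as spaces while keeping "/" separators.
    path = quote(key.lstrip("/"))
    if cdn_host:
        return f"https://{cdn_host}/{path}"
    return f"https://s3.amazonaws.com/{bucket}/{path}"
```

For example, `asset_url("images/logo 1.png", "example-app-assets")` yields `https://s3.amazonaws.com/example-app-assets/images/logo%201.png`, and passing `cdn_host="cdn.example.com"` swaps in that host without touching the rest of your templates.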